Conversation

There was a problem hiding this comment.

Pull request overview

Adds a chat performance benchmarking harness (perf regression + memory leak check) under scripts/chat-simulation/, along with a CI workflow and docs to run/interpret the benchmarks.

Changes:

- Introduces a Playwright-driven chat perf regression runner with baseline comparison and CI Markdown summaries.

- Adds a state-based chat “memory leak” checker and supporting mock LLM server/scenario fixtures.

- Wires new npm scripts (

perf:chat,perf:chat-leak), a scheduled/dispatchable GitHub Actions workflow, and a skill doc.

Show a summary per file

| File | Description |

|---|---|

| scripts/chat-simulation/test-chat-perf-regression.js | New perf benchmark runner with trace/profiling + baseline comparison and CI summary output. |

| scripts/chat-simulation/test-chat-mem-leaks.js | New state-based leak checker cycling scenarios and measuring post-GC heap/DOM deltas. |

| scripts/chat-simulation/common/utils.js | Shared helpers for config, build download, launching VS Code via CDP, stats, and pre-seeding storage. |

| scripts/chat-simulation/common/mock-llm-server.js | Local deterministic mock LLM server supporting streaming + multi-turn/tool-call scenarios. |

| scripts/chat-simulation/common/perf-scenarios.js | Scenario catalog (content/tool-call/multi-turn) used by the perf and leak scripts. |

| scripts/chat-simulation/config.jsonc | Default settings for runs/thresholds/baseline version/leak thresholds. |

| scripts/chat-simulation/fixtures/* | Fixture TS files used as deterministic “tool read/edit” targets in scenarios. |

| package.json | Adds perf:chat and perf:chat-leak npm scripts. |

| .github/workflows/chat-perf.yml | Adds CI workflow to run the benchmark (matrix) and optionally leak check. |

| .github/skills/chat-perf/SKILL.md | Documents how to run/interpret the benchmarks. |

| build/filters.ts | Excludes *.jsonc from the copyright header filter. |

| .gitignore | Ignores .chat-simulation-data output directory. |

Copilot's findings

- Files reviewed: 18/19 changed files

- Comments generated: 6

| function preseedStorage(userDataDir) { | ||

| const globalStorageDir = path.join(userDataDir, 'User', 'globalStorage'); | ||

| fs.mkdirSync(globalStorageDir, { recursive: true }); | ||

| const dbPath = path.join(globalStorageDir, 'state.vscdb'); | ||

| execSync(`sqlite3 "${dbPath}" "CREATE TABLE IF NOT EXISTS ItemTable (key TEXT UNIQUE ON CONFLICT REPLACE, value BLOB); INSERT INTO ItemTable (key, value) VALUES ('builtinChatExtensionEnablementMigration', 'true'); INSERT INTO ItemTable (key, value) VALUES ('chat.tools.global.autoApprove.optIn', 'true');"`); | ||

| } |

There was a problem hiding this comment.

preseedStorage shells out via execSync with an interpolated file path. Since userDataDir includes runId (which comes from scenario IDs/CLI input), a runId containing quotes or shell metacharacters can break quoting and potentially lead to command injection or simply failing to preseed storage. Prefer spawnSync('sqlite3', [dbPath, sql], { shell: false }) (or equivalent) and/or validate/sanitize runId before it becomes part of a shell command.

| const opts = { | ||

| iterations: CONFIG.iterations ?? 3, | ||

| messages: CONFIG.messages ?? 5, | ||

| verbose: false, | ||

| /** @type {string | undefined} */ | ||

| build: undefined, | ||

| leakThresholdMB: CONFIG.leakThresholdMB ?? 5, | ||

| }; |

There was a problem hiding this comment.

parseArgs() defines messages (and the help text advertises --messages), but the rest of the script never uses opts.messages. This makes the CLI misleading and suggests either a missing feature or dead code. Either wire messages into the leak-check behavior, or remove the flag/config entry and update the usage docs accordingly.

| Launches one VS Code session, sends N messages sequentially, forces GC between each, and measures renderer heap and DOM node count. Uses **linear regression** on the samples to compute per-message growth rate, which is compared against a threshold. | ||

|

|

||

| ### Key flags | ||

|

|

||

| | Flag | Default | Description | | ||

| |---|---|---| | ||

| | `--messages <n>` / `-n` | `10` | Number of messages to send. More = more accurate slope. | | ||

| | `--build <path\|ver>` / `-b` | local dev | Build to test. | | ||

| | `--threshold <MB>` | `2` | Max per-message heap growth in MB. | | ||

| | `--verbose` | — | Print per-message heap/DOM counts. | | ||

|

|

||

| ### What it measures | ||

|

|

||

| - **Heap growth slope** (MB/message) — linear regression over forced-GC heap samples. A leak shows as sustained positive slope. | ||

| - **DOM node growth** (nodes/message) — catches rendering leaks where elements aren't cleaned up. Healthy chat virtualizes old messages so node count plateaus. | ||

|

|

||

| ### Interpreting results | ||

|

|

||

| - `0.3–1.0 MB/msg` — normal (V8 internal overhead, string interning) | ||

| - `>2.0 MB/msg` — likely leak, investigate retained objects | ||

| - DOM nodes stable after first message — normal (chat list virtualization working) | ||

| - DOM nodes growing linearly — rendering leak, check disposable cleanup |

There was a problem hiding this comment.

The leak-check documentation describes a message-based approach (N messages, slope MB/message, etc.), but the implementation in test-chat-mem-leaks.js is iteration/state-based (cycle through all scenarios per iteration, then open a new chat and compare). Please update this doc to reflect the current behavior/flags (e.g. --iterations, total residual MB threshold) so readers don’t run the wrong command or misinterpret results.

| Launches one VS Code session, sends N messages sequentially, forces GC between each, and measures renderer heap and DOM node count. Uses **linear regression** on the samples to compute per-message growth rate, which is compared against a threshold. | |

| ### Key flags | |

| | Flag | Default | Description | | |

| |---|---|---| | |

| | `--messages <n>` / `-n` | `10` | Number of messages to send. More = more accurate slope. | | |

| | `--build <path\|ver>` / `-b` | local dev | Build to test. | | |

| | `--threshold <MB>` | `2` | Max per-message heap growth in MB. | | |

| | `--verbose` | — | Print per-message heap/DOM counts. | | |

| ### What it measures | |

| - **Heap growth slope** (MB/message) — linear regression over forced-GC heap samples. A leak shows as sustained positive slope. | |

| - **DOM node growth** (nodes/message) — catches rendering leaks where elements aren't cleaned up. Healthy chat virtualizes old messages so node count plateaus. | |

| ### Interpreting results | |

| - `0.3–1.0 MB/msg` — normal (V8 internal overhead, string interning) | |

| - `>2.0 MB/msg` — likely leak, investigate retained objects | |

| - DOM nodes stable after first message — normal (chat list virtualization working) | |

| - DOM nodes growing linearly — rendering leak, check disposable cleanup | |

| Launches one VS Code session, runs the full leak scenario set for a configured number of iterations, forces cleanup/GC between states, then opens a fresh chat and compares the resulting renderer heap and DOM node count against the initial baseline. This check is **iteration/state-based**, not message-slope-based: it looks for residual memory or DOM growth that remains after repeated scenario cycles. | |

| ### Key flags | |

| | Flag | Default | Description | | |

| |---|---|---| | |

| | `--iterations <n>` / `-n` | `10` | Number of full scenario cycles to run before comparing against a fresh chat. Higher values make retained-state leaks easier to reproduce. | | |

| | `--build <path\|ver>` / `-b` | local dev | Build to test. | | |

| | `--threshold <MB>` | `2` | Max allowed **total residual heap growth** in MB after all iterations complete. | | |

| | `--verbose` | — | Print per-iteration heap/DOM counts and the final residual comparison. | | |

| ### What it measures | |

| - **Residual heap growth** (MB total) — how much renderer heap remains above baseline after running all scenarios repeatedly and then starting a new chat. A leak shows up as memory that does not return close to baseline. | |

| - **Residual DOM growth** (nodes total) — catches rendering leaks where chat elements or listeners remain retained after scenario cleanup. Healthy behavior should return close to the original DOM footprint when a fresh chat is opened. | |

| ### Interpreting results | |

| - Small residual deltas after the final fresh-chat comparison — normal (temporary allocations were cleaned up) | |

| - Residual heap above the configured `--threshold` — likely leak, investigate retained chat/session state | |

| - DOM node count returning near baseline — normal (chat UI state was disposed correctly) | |

| - DOM node count staying elevated after the final fresh-chat comparison — rendering leak, check disposable cleanup and retained view state |

| on: | ||

| pull_request: | ||

| paths: | ||

| - '.github/workflows/chat-perf.yml' |

There was a problem hiding this comment.

The pull_request.paths filter only includes the workflow file itself, so this workflow will not run on PRs that change chat code. This conflicts with the intent described in the skill doc (“gate PRs that touch chat UI code”). If the goal is PR gating, broaden the paths filter (or remove it) to include the relevant chat/workbench files or the chat-simulation scripts.

| - '.github/workflows/chat-perf.yml' | |

| - '.github/workflows/chat-perf.yml' | |

| - 'src/vs/workbench/contrib/chat/**' | |

| - 'src/vs/workbench/contrib/inlineChat/**' | |

| - 'src/vs/workbench/contrib/chatInput/**' |

| const cur = current[group]?.[metric]; | ||

| const bas = base[group]?.[metric]; | ||

| if (!cur || !bas || !bas.median) { continue; } | ||

| const change = (cur.median - bas.median) / bas.median; |

There was a problem hiding this comment.

In baseline comparison, the check if (!cur || !bas || !bas.median) treats a baseline median of 0 as “missing” and skips the metric. Some metrics (e.g. counts) can legitimately have a median of 0, which would prevent regressions from being detected. Use an explicit null/undefined check for bas.median (and cur.median) instead of a truthiness check.

| offset: 1, | ||

| limit: 50, |

There was a problem hiding this comment.

multi-turn-user's read-file tool call uses offset/limit, but Copilot's read_file tool schema expects startLine/endLine (1-indexed). With the current arguments the tool invocation is likely to be rejected or ignored, which would invalidate this scenario's behavior/perf results.

| offset: 1, | |

| limit: 50, | |

| startLine: 1, | |

| endLine: 50, |

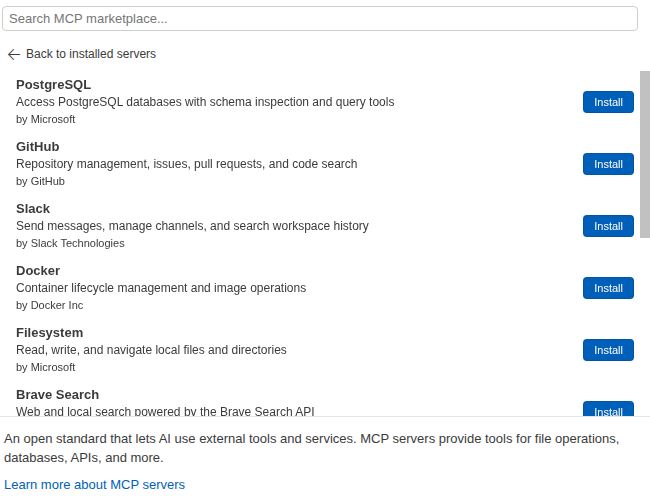

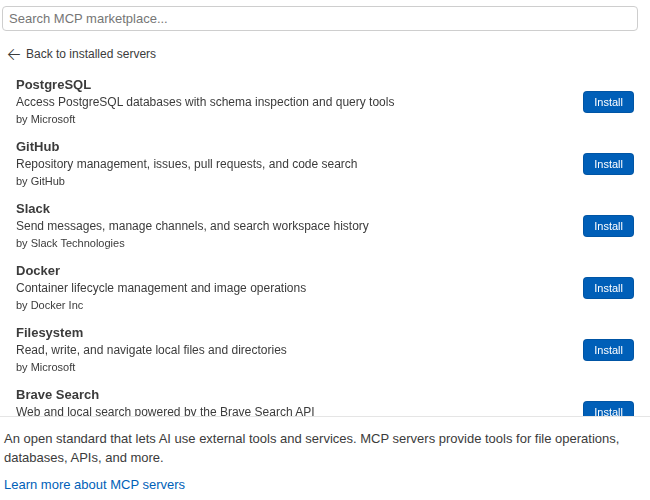

Screenshot ChangesBase: Changed (2)blocks-ci screenshots changedReplace the contents of Updated blocks-ci-screenshots.md<!-- auto-generated by CI — do not edit manually -->

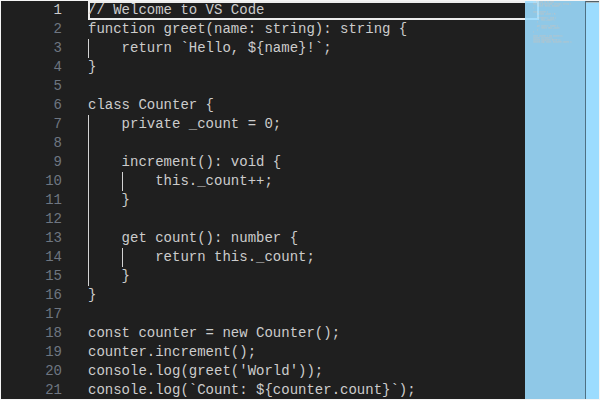

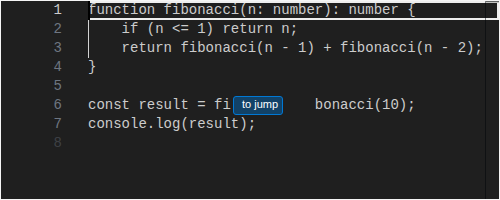

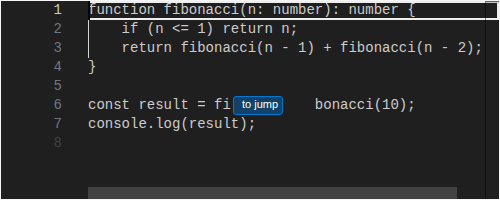

#### editor/codeEditor/CodeEditor/Dark

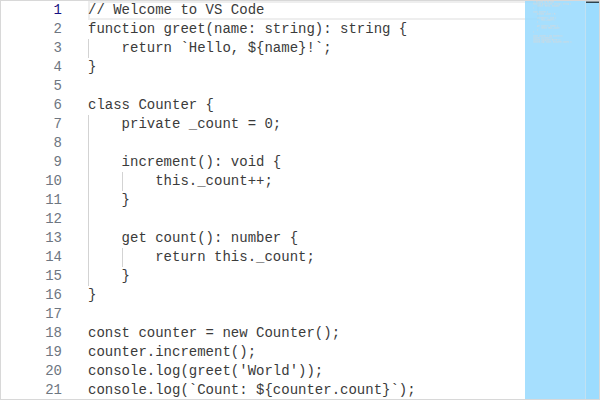

#### editor/codeEditor/CodeEditor/Light

|

No description provided.