Conversation

Screenshot ChangesBase: Changed (2)blocks-ci screenshots changedReplace the contents of Updated blocks-ci-screenshots.md<!-- auto-generated by CI — do not edit manually -->

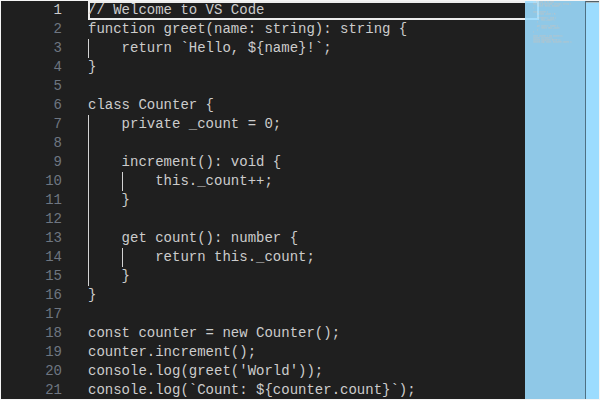

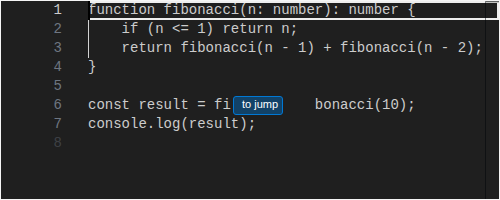

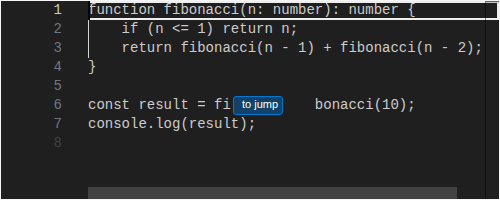

#### editor/codeEditor/CodeEditor/Dark

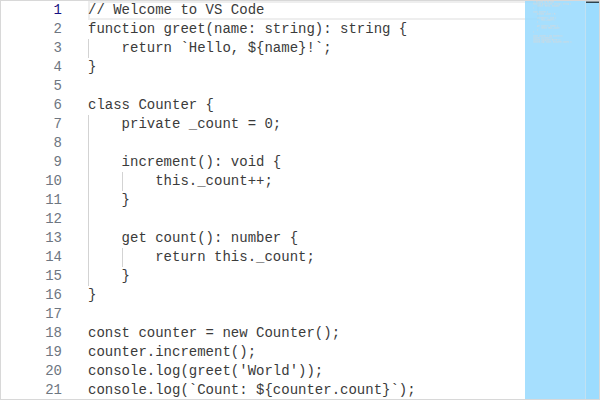

#### editor/codeEditor/CodeEditor/Light

|

There was a problem hiding this comment.

Pull request overview

Adds a chat performance benchmarking harness to the VS Code repo, including a mock LLM server, scenario definitions, local CLI entrypoints, and a GitHub Actions workflow to run comparisons (and optional leak checks) in CI.

Changes:

- Introduce chat perf regression runner + memory leak checker scripts under

scripts/chat-simulation/. - Add mock LLM server + scenario registry (content, tool-call, multi-turn) and supporting fixtures/config.

- Wire up new

npm run perf:chat/perf:chat-leakscripts and add a CI workflow + docs for running the benchmarks.

Show a summary per file

| File | Description |

|---|---|

| scripts/chat-simulation/test-chat-perf-regression.js | New Playwright-based perf runner collecting timing/rendering/memory metrics and comparing vs a baseline build/JSON. |

| scripts/chat-simulation/test-chat-mem-leaks.js | New state-based leak checker that cycles scenarios and measures post-GC heap + DOM node deltas. |

| scripts/chat-simulation/common/utils.js | Shared utilities for build resolution, VS Code launch, stats, and config handling. |

| scripts/chat-simulation/common/mock-llm-server.js | Local SSE mock server implementing Copilot/CAPI-like endpoints and scenario-based responses. |

| scripts/chat-simulation/common/perf-scenarios.js | Defines and registers benchmark scenarios (content, tool-call, multi-turn) used by the runners. |

| scripts/chat-simulation/config.jsonc | Default configuration for perf regression and leak thresholds. |

| scripts/chat-simulation/fixtures/*.ts | Fixture TS files used as deterministic tool-call read/edit targets for scenarios. |

| package.json | Adds perf:chat and perf:chat-leak npm scripts. |

| .github/workflows/chat-perf.yml | New CI workflow to run perf comparisons (matrix-split scenarios) and optional leak checks. |

| .github/skills/chat-perf/SKILL.md | Documentation for running the new perf + leak tools and interpreting results. |

| .gitignore | Ignores .chat-simulation-data output directory. |

| build/filters.ts | Excludes *.jsonc from the copyright filter (to allow the new config). |

Copilot's findings

- Files reviewed: 18/19 changed files

- Comments generated: 5

|

|

||

| // -- Save baseline --------------------------------------------------- | ||

| if (opts.saveBaseline) { | ||

| if (!opts.baseline) { console.error('[chat-simulation] --save-baseline requires --baseline <path>'); process.exit(1); } |

There was a problem hiding this comment.

--save-baseline can overwrite the baseline JSON generated earlier in the run: opts.baseline is set to the downloaded baseline build’s JSON path, so --save-baseline will end up writing the test results into the baseline file. This breaks subsequent comparisons/caching. Consider requiring an explicit --baseline <path> argument for --save-baseline (separate from --baseline-build) and writing the saved baseline to that user-provided path (or a dedicated --save-baseline-as).

| if (!opts.baseline) { console.error('[chat-simulation] --save-baseline requires --baseline <path>'); process.exit(1); } | |

| if (!opts.baseline) { console.error('[chat-simulation] --save-baseline requires --baseline <path>'); process.exit(1); } | |

| if (opts.baselineBuild) { | |

| console.error('[chat-simulation] --save-baseline cannot be used with --baseline-build; pass an explicit --baseline <path> to save a new baseline'); | |

| process.exit(1); | |

| } |

| if (!cur || !bas || !bas.median) { continue; } | ||

| const change = (cur.median - bas.median) / bas.median; | ||

| const pct = `${change > 0 ? '+' : ''}${(change * 100).toFixed(1)}%`; |

There was a problem hiding this comment.

Baseline comparison skips metrics when the baseline median is 0 because of !bas.median. Several count-based metrics (layouts/style recalcs/long tasks) can legitimately have a median of 0, which would silently exclude them from regression detection. Use an explicit null/undefined check (and handle division-by-zero for percent change) instead of a truthiness check.

| if (!cur || !bas || !bas.median) { continue; } | |

| const change = (cur.median - bas.median) / bas.median; | |

| const pct = `${change > 0 ? '+' : ''}${(change * 100).toFixed(1)}%`; | |

| if (!cur || !bas || cur.median === null || cur.median === undefined || bas.median === null || bas.median === undefined) { continue; } | |

| const change = bas.median !== 0 | |

| ? (cur.median - bas.median) / bas.median | |

| : (cur.median === 0 ? 0 : Number.POSITIVE_INFINITY); | |

| const pct = Number.isFinite(change) | |

| ? `${change > 0 ? '+' : ''}${(change * 100).toFixed(1)}%` | |

| : '+∞%'; |

| console.log(` [ext-host] Error collecting metrics: ${err}`); | ||

| } | ||

| } finally { | ||

| extHostInspector.close(); |

There was a problem hiding this comment.

The extension-host inspector is closed in the inner finally without guarding against errors. If close() throws (e.g., WS already closed), the whole run will fail and metrics/diagnostics are lost. Wrap this close() in a try/catch (and/or set extHostInspector = null after closing) to keep cleanup best-effort.

| extHostInspector.close(); | |

| try { | |

| extHostInspector.close(); | |

| } catch (err) { | |

| if (verbose) { | |

| console.log(` [ext-host] Error closing inspector: ${err}`); | |

| } | |

| } |

| const opts = { | ||

| iterations: CONFIG.iterations ?? 3, | ||

| messages: CONFIG.messages ?? 5, | ||

| verbose: false, | ||

| /** @type {string | undefined} */ | ||

| build: undefined, | ||

| leakThresholdMB: CONFIG.leakThresholdMB ?? 5, | ||

| }; |

There was a problem hiding this comment.

--messages is parsed and documented as controlling “messages to send per iteration”, but it is never used. The leak check currently always cycles through all scenarios once per iteration. Either remove the flag/help text, or implement it (e.g., limit scenarios per iteration or repeat a subset) so CLI behavior matches documentation.

| /** @param {string} text */ | ||

| function stripJsoncComments(text) { return text.replace(/\/\/.*/g, '').replace(/\/\*[\s\S]*?\*\//g, ''); } | ||

|

|

||

| /** | ||

| * Load a namespaced section from config.jsonc. | ||

| * @param {string} section - Top-level key (e.g. 'perfRegression', 'memLeaks') | ||

| * @returns {Record<string, any>} | ||

| */ | ||

| function loadConfig(section) { | ||

| const raw = fs.readFileSync(path.join(__dirname, '..', 'config.jsonc'), 'utf-8'); | ||

| const config = JSON.parse(stripJsoncComments(raw)); | ||

| return config[section] ?? {}; |

There was a problem hiding this comment.

stripJsoncComments() uses regexes to remove // and /* */ comments. This is not a safe JSONC parser (it will also strip comment-like sequences inside strings, e.g. "https://..."), which can make config parsing brittle. Since the repo already depends on jsonc-parser, consider using it to parse config.jsonc instead of regex stripping.

No description provided.