…etail

Surfaces the existing CLI resume mechanics (--resume, --rerun-failed,
--retry-errors, --output) in Studio so users can finish an interrupted
run from the web UI instead of dropping to a terminal.

Server:
- RunEvalRequest accepts resume / rerun_failed / retry_errors / output.
- buildCliArgs translates them to the corresponding CLI flags.
- Mutual-exclusivity validation returns 400 with a usable error.
- Read-only guard now also covers /api/benchmarks/:id/eval/run.
- handleRunDetail returns run_dir + suite_filter (from benchmark.json's
  metadata.eval_file) for local runs so the UI can target the same
  workspace.
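
To make the flag translation and the mutual-exclusivity check concrete, here is a minimal sketch. The four field names and the flag spellings come from this PR. The types, the error text, and the helper name buildResumeCliArgs are hypothetical and chosen only for illustration, so this should not be read as the actual buildCliArgs or validation code.

```typescript
// Hypothetical request shape; only the four field names come from the PR.
interface ResumeOptions {
  resume?: boolean;
  rerun_failed?: boolean;
  retry_errors?: string; // assumed: path forwarded to --retry-errors
  output?: string; // assumed: directory forwarded to --output
}

// Returns an error message when more than one mode is set, otherwise null.
// A server handler would presumably map a non-null result to HTTP 400.
function validateResumeOptions(opts: ResumeOptions): string | null {
  const modes: string[] = [];
  if (opts.resume) modes.push("resume");
  if (opts.rerun_failed) modes.push("rerun_failed");
  if (opts.retry_errors) modes.push("retry_errors");
  return modes.length > 1
    ? `Options are mutually exclusive: ${modes.join(", ")}`
    : null;
}

// Translates the resume-related request fields into CLI flags.
function buildResumeCliArgs(opts: ResumeOptions): string[] {
  const args: string[] = [];
  if (opts.resume) args.push("--resume");
  if (opts.rerun_failed) args.push("--rerun-failed");
  if (opts.retry_errors) args.push("--retry-errors", opts.retry_errors);
  if (opts.output) args.push("--output", opts.output);
  return args;
}
```

In this sketch, a body of `{ rerun_failed: true, output: "runs/x" }` becomes `["--rerun-failed", "--output", "runs/x"]`, while `{ resume: true, rerun_failed: true }` is rejected by the validator before any arguments are built.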

UI:
- New ResumeRunActions component renders "Resume run" + "Rerun failed
  cases" buttons on /runs/:runId (and the benchmark-scoped variant)
  when at least one row has executionStatus === 'execution_error'.
- Hidden in read-only mode; disabled with an explanatory tooltip when
  run_dir or suite_filter cannot be resolved (e.g. remote runs).
- After launch, navigates to /jobs/:runId for live progress.
- Pure helpers (shouldShowResumeActions, buildResumeRequestBody)
  are unit-tested without rendering React.
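
The visibility rule and request shaping above lend themselves to pure functions, which is what makes them testable without React. The sketch below is one possible shape, not the PR's code. The helper names, the executionStatus check, and the run_dir / suite_filter fields come from this PR. The parameter types, the mode argument, and sending run_dir as output are assumptions for illustration.

```typescript
// Hypothetical row and target types; only the field names
// executionStatus, run_dir and suite_filter appear in the PR.
interface ResultRow {
  executionStatus: string;
}

interface ResumeTarget {
  run_dir?: string;
  suite_filter?: string;
}

type ResumeMode = "resume" | "rerun_failed";

// Buttons are shown only outside read-only mode and only when at
// least one row ended in an execution error.
function shouldShowResumeActions(rows: ResultRow[], readOnly: boolean): boolean {
  if (readOnly) return false;
  return rows.some((row) => row.executionStatus === "execution_error");
}

// Returns null when the target workspace cannot be resolved, in which
// case the UI would disable the buttons. Assumption: run_dir is sent as
// `output` so the CLI writes into the same workspace.
function buildResumeRequestBody(
  target: ResumeTarget,
  mode: ResumeMode,
): Record<string, string | boolean> | null {
  if (!target.run_dir || !target.suite_filter) return null;
  return {
    suite_filter: target.suite_filter,
    output: target.run_dir,
    [mode]: true,
  };
}
```

Keeping these decisions out of the component means a test can assert on plain inputs and outputs, for example that a remote run with no run_dir yields null rather than a request body.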

Closes #1219

Co-Authored-By: Claude Opus 4.7 <noreply@anthropic.com>

Summary

Surfaces the existing CLI resume mechanics (--resume, --rerun-failed, --retry-errors, --output) in Studio so users can finish an interrupted or partially-errored run from the web UI instead of dropping to a terminal.

Changes

Server (apps/cli/src/commands/results/)

- RunEvalRequest accepts resume / rerun_failed / retry_errors / output (snake_case wire format).
- buildCliArgs translates these into --resume, --rerun-failed, --retry-errors <path>, --output <dir>.
- validateResumeOptions returns 400 with a usable message when the three modes are combined.
- Read-only guard now also covers /api/benchmarks/:id/eval/run (was missing before).
- handleRunDetail reads benchmark.json for the run and exposes run_dir (relative to cwd) and suite_filter (from metadata.eval_file) so the UI can target the same workspace. Local runs only: remote runs in the results-repo cache cannot be resumed in place.

UI (apps/studio/src/)

- New ResumeRunActions component renders "↻ Resume run" + "Rerun failed cases" buttons on /runs/:runId and /benchmarks/:id/runs/:runId when at least one row has executionStatus === 'execution_error'.
- Hidden in read-only mode; disabled with an explanatory tooltip when run_dir or suite_filter cannot be resolved.
- After launch via /api/eval/run, navigates to /jobs/:runId for live progress.
- Pure helpers (shouldShowResumeActions, buildResumeRequestBody) are unit-tested without rendering React.

Tests added

- apps/cli/test/commands/results/serve.test.ts: request/preview shaping for resume / rerun_failed / retry_errors, mutual-exclusivity 400s, read-only 403 (unscoped + benchmark-scoped), run_dir + suite_filter exposure on /api/runs/:filename. 53 tests in this file.
- apps/studio/src/components/resume-run-helpers.test.ts: visibility logic and request-body shape for both modes (incl. read-only hides, missing target omitted). 7 tests.

Test plan

- bun run test: 2337 tests pass
- bun run typecheck: clean
- bun run lint: clean
- …execution_error, click-through)

Red / Green UAT: synthetic fixture

Hand-crafted run workspace with one

execution_status: execution_errorrow + abenchmark.jsonwhosemetadata.eval_filepoints at a known eval YAML. Confirms wire contract + UI surface acrossmain, this branch, and--read-only.Red —

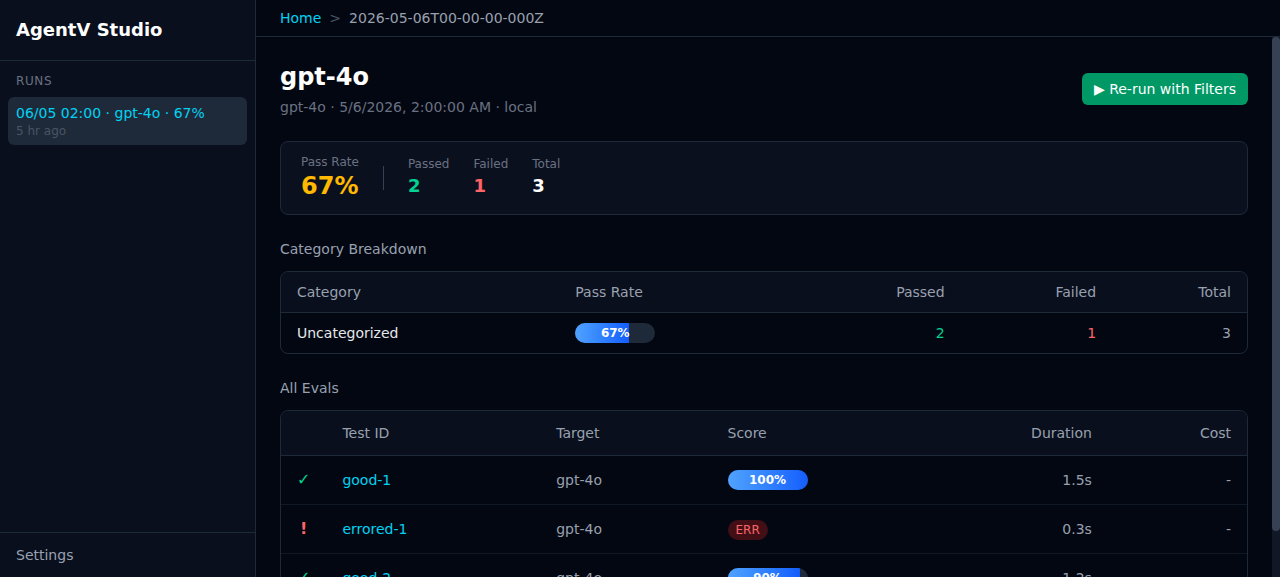

mainGET /api/runs/:filenamedoes not includerun_dir/suite_filter. Run detail page exposes only "▶ Re-run with Filters":Green — this branch

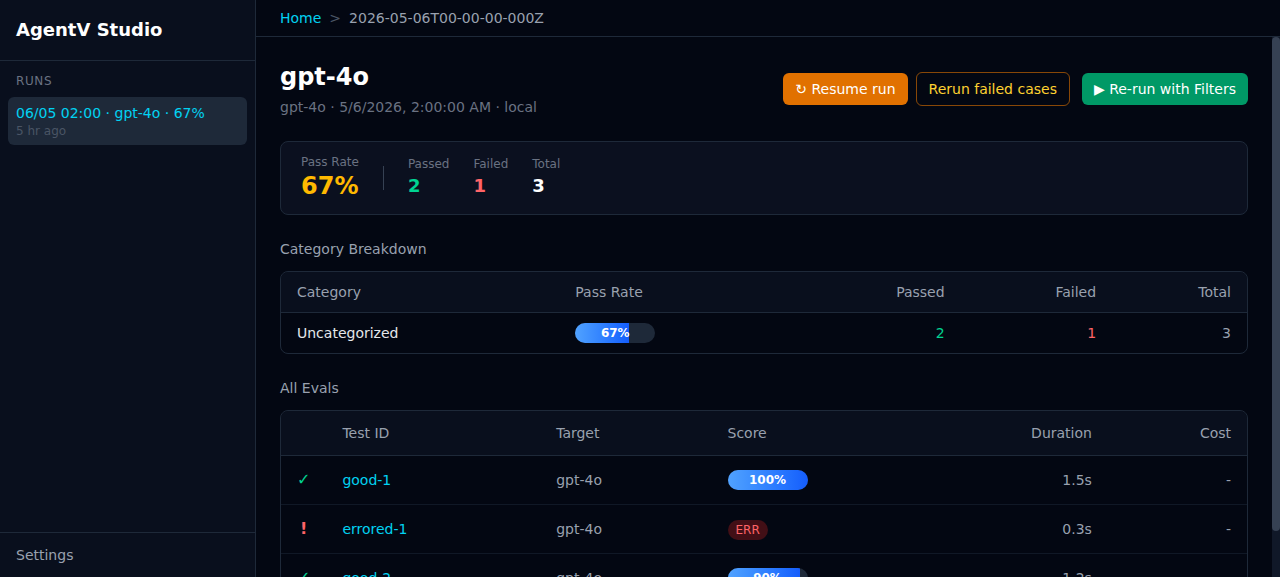

GET /api/runs/:filenamereturns:UI renders the new actions:

/api/eval/previewproduces the expected CLI invocations and validation rejects mode combos with 400. Read-only mode hides both buttons and the API still returns 403.Live e2e UAT — real Azure OpenAI run

Built a 2-test eval, ran it with

--budget-usd 0.000001 --workers 1to deliberately trigger oneexecution_error(budget_exceeded on the second test). Then opened the run in Studio and clicked Rerun failed cases.Eval definition (

tiny.eval.yaml):Initial state (

index.jsonl, before button click):UI snapshot at

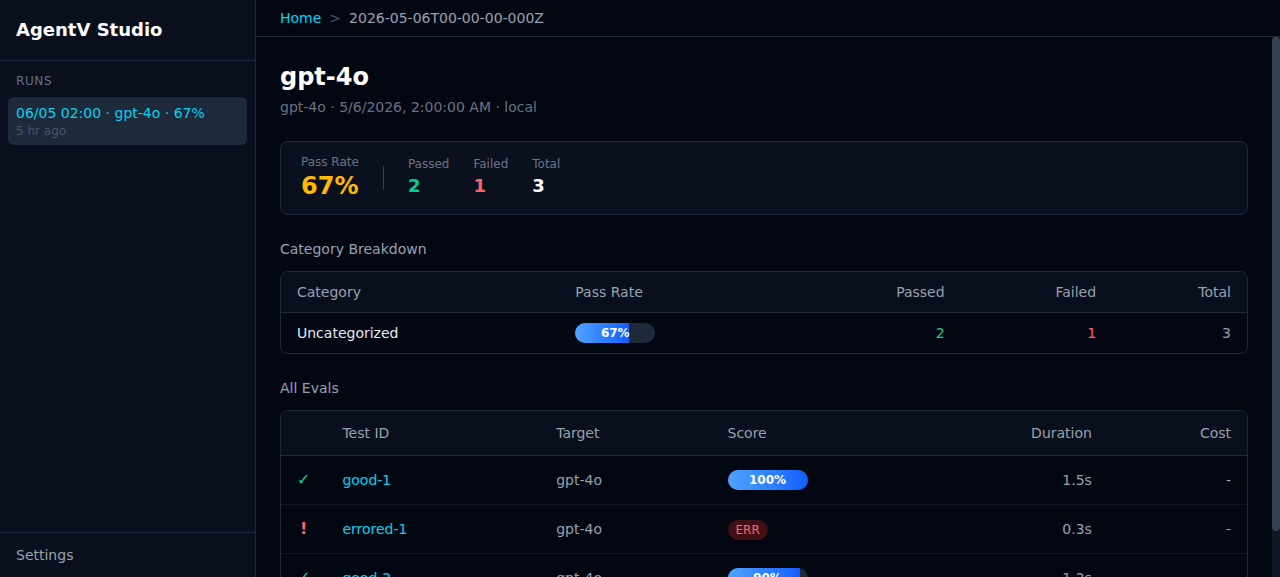

/runs/2026-05-06T05-36-19-075Z— both new buttons visible, header shows 50% pass rate:Click "Rerun failed cases" → browser navigated to

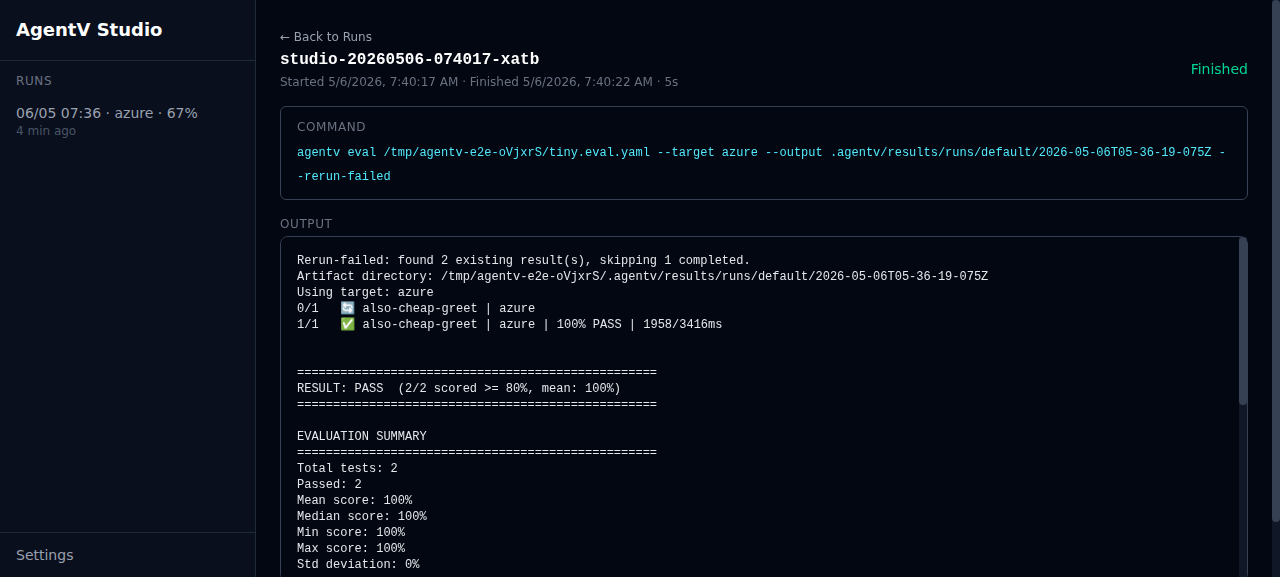

/jobs/studio-20260506-074017-xatb. The Studio job tracker showed statusrunningthenfinishedwith exit code 0. Spawned CLI command (returned in the launch response):Final state (

index.jsonl, after rerun finished):Acceptance: previously-passing test was skipped (timestamp on

cheap-greetunchanged); errored test was re-run and now passes; pass rate updated from 50% → 67% in the Studio header.Pre-existing bug discovered

The live e2e surfaced an unrelated bug in resolveCliPath (off-by-one in the currentDir fallback path) which prevents Studio from spawning the CLI when run from source against a foreign cwd. Filed as #1221 and worked around in this UAT with an agentv PATH shim. Not in scope for this PR: the global-install path used by end users is unaffected.

🤖 Generated with Claude Code